The road to the space coast (looking back on the ideas that led to Euclid)

On Florida’s Space Coast, the Euclid satellite is undergoing final preparations for launch on a Falcon 9 rocket next Saturday, July 1st, at 11:12 EDT. Although the Euclid mission was selected by ESA in 2011, the origins of the project date back more than a decade before that, starting with the realisation that the expansion of the Universe is accelerating.

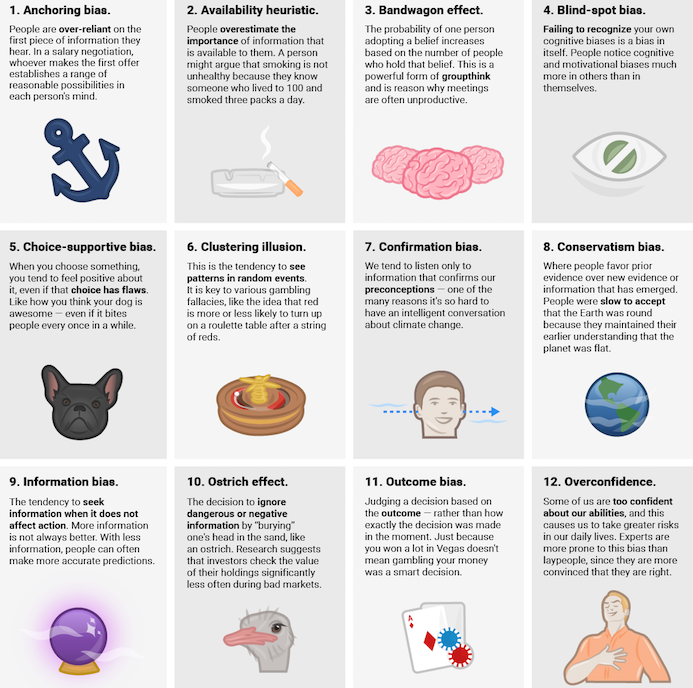

In cinema, great discoveries are usually accompanied by the leading lights throwing their hands in the air and exclaiming, “This changes everything!” But in real life, scientists are cautious, and the first reaction to any new discovery is usually: is there a mistake? Is the data right? Did we miss anything? You need to find the right balance between double-checking endlessly (and getting scooped by your competitors) and rushing into print with something that is wrong. At the end of the 1990s, measurements of distant supernovae suggesting that the expansion of the Universe is accelerating were initially greeted with scepticism.

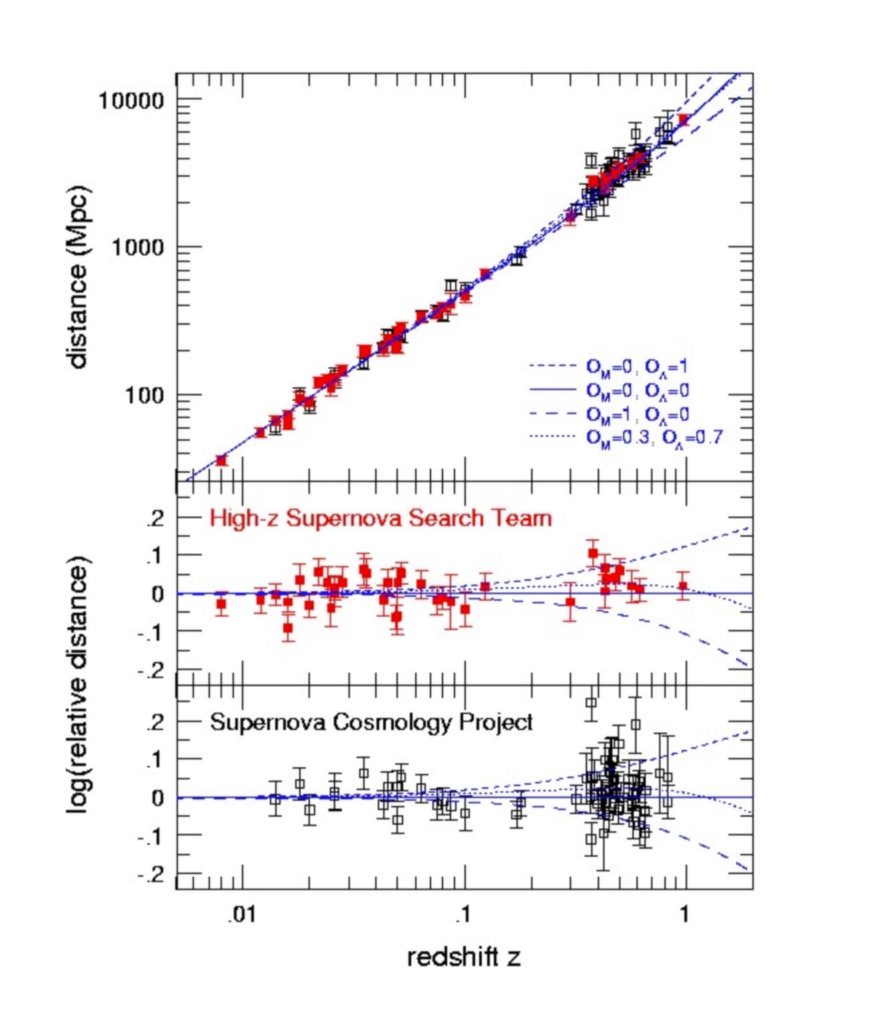

Conceptually, what those measurements were saying was simple: the further away an object is, the faster it is receding from us. Edwin Hubble’s early observations of galaxies demonstrated that there was a straight-line relationship between the distance of an object and the speed at which it is receding. The simplest explanation (although one that scientists took a while to accept) was that the Universe was expanding.
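As a rough worked example of that straight-line relationship (the symbols and the round value of the Hubble constant below are assumptions added for illustration, not figures from Hubble’s own data), a galaxy 100 megaparsecs away would be receding at about 7000 kilometres per second:

```latex
v = H_0 \, d,
\qquad
H_0 \approx 70\ \mathrm{km\,s^{-1}\,Mpc^{-1}}
\;\Rightarrow\;
v(d = 100\ \mathrm{Mpc}) \approx 70 \times 100 = 7000\ \mathrm{km\,s^{-1}}
```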

Over the next few decades, researchers embarked on a long quest to find different classes of objects for which they could estimate distances. Supernovae were one of the best: it turned out that if you could measure how the brightness of supernovae changed with time, you could estimate their distances. You could then compare how the distance depended on redshift, which you could measure with a spectrograph. Wide-field cameras on large telescopes allowed astronomers to find supernovae further and further away, and by the end of the 1990s, samples were large enough to detect the first tiny deviations from Hubble’s simple straight-line law. The expansion was accelerating. The unknown physical process driving this acceleration was given a name: “Lambda”, or “dark energy”.
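To give a feel for the size of the effect, here is a minimal sketch comparing the predicted distance to a supernova in a flat universe with and without Lambda. It uses the standard textbook expression for the luminosity distance; the function names, the value of the Hubble constant and the density parameters (0.3 for matter, 0.7 for Lambda) are illustrative assumptions, not the values or the code used by the supernova teams.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s
H0 = 70.0             # assumed Hubble constant in km/s/Mpc (illustrative)


def luminosity_distance_mpc(z, omega_m, omega_lambda, steps=1000):
    """Luminosity distance in Mpc for a flat universe (omega_m + omega_lambda = 1).

    Only valid for z > 0; the integral is done with a simple trapezoidal rule.
    """
    def inv_e(zp):
        # 1 / E(z), where E(z) is the expansion rate relative to today
        return 1.0 / math.sqrt(omega_m * (1.0 + zp) ** 3 + omega_lambda)

    h = z / steps
    total = 0.5 * (inv_e(0.0) + inv_e(z))
    for i in range(1, steps):
        total += inv_e(i * h)
    comoving = (C_KM_S / H0) * total * h
    return (1.0 + z) * comoving


def distance_modulus(d_mpc):
    """Difference between apparent and absolute magnitude for a distance in Mpc."""
    return 5.0 * math.log10(d_mpc) + 25.0


for z in (0.1, 0.5, 1.0):
    mu_matter_only = distance_modulus(luminosity_distance_mpc(z, 1.0, 0.0))
    mu_with_lambda = distance_modulus(luminosity_distance_mpc(z, 0.3, 0.7))
    # A positive difference means the supernova looks fainter with Lambda
    print(f"z = {z}: extra dimming with Lambda = {mu_with_lambda - mu_matter_only:.2f} mag")
```

With these assumed parameters, supernovae at a given redshift come out a few tenths of a magnitude fainter in the model with Lambda than in the matter-only model, and the difference shrinks at low redshift. That is why large samples of distant supernovae were needed to see it.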

But those points on the right-hand side of the graphs which deviated from Hubble’s straight-line law had big error bars. Everyone knew that supernovae were fickle creatures in any case, subject to a variety of poorly understood physical mechanisms that could mimic the effect that the observers were reporting.

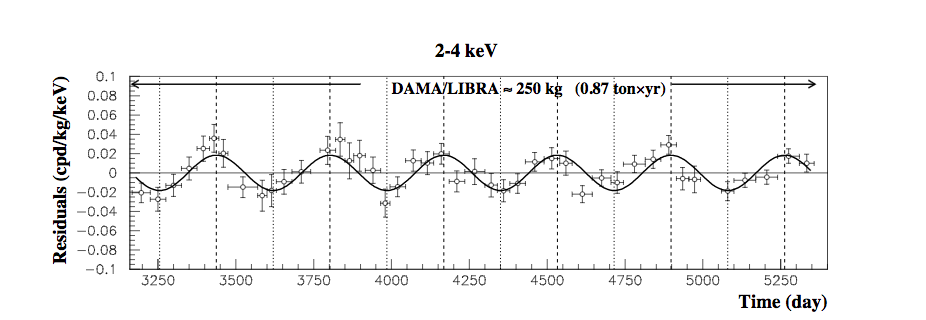

Initially, there was a lot of resistance to this idea of an accelerating Universe, and to dark energy. Nobody wanted Lambda. Not the theorists, because there were no good theoretical explanations for Lambda. And not the simulators, because Lambda unnecessarily complicated their simulations. And not even the observers, because it meant that every piece of code used to estimate physical properties of distant galaxies had to be re-written (a lot of boring work). Meanwhile, the supernova measurements became more robust and the reality of the existence of Lambda became harder and harder to avoid. But what was it? It was hard to get large samples of supernovae, so what other techniques could be used to discover what Lambda really is? Soon, measurements of the cosmic microwave background indicated that a model with Lambda was indeed preferred, but because the acceleration only happens at relatively recent epochs in the Universe, microwave background observations are of only limited use here.

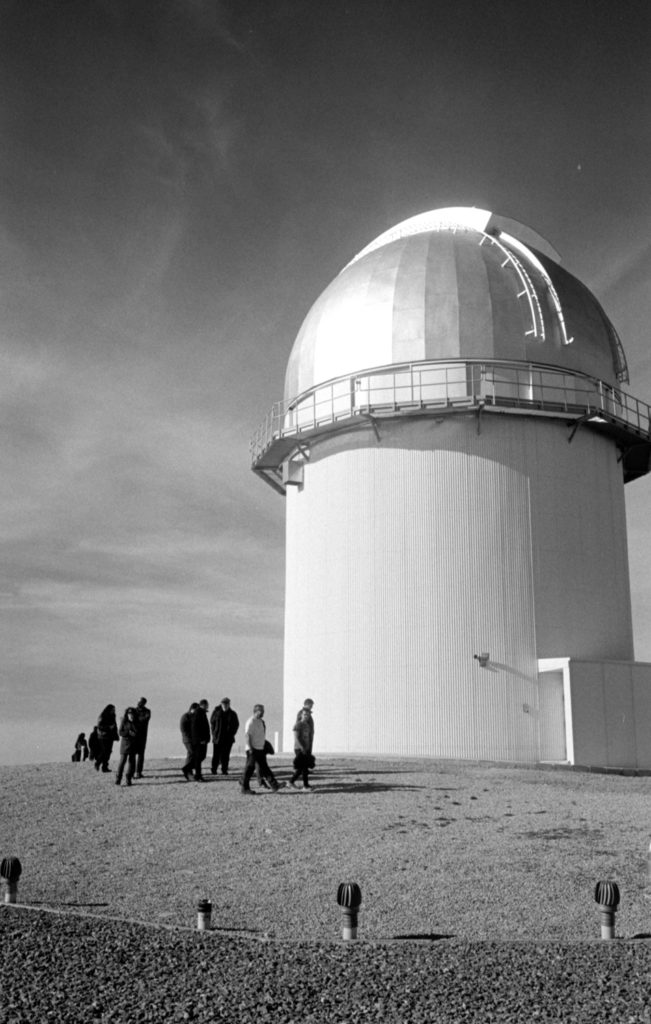

Meanwhile, several key instrumental developments were taking place. At the Canada-France-Hawaii Telescope and other observatories, wide-field cameras with electronic detectors (charge-coupled devices, or CCDs) were being perfected. These instruments enabled astronomers for the first time to survey wide swathes of the sky and measure the positions, shapes and colours of tens of thousands of objects. At the same time, at least two groups were testing the first wide-field spectrographs for the world’s largest telescopes. Fed by targets selected from the new wide-field survey cameras, these instruments allowed the determination of the precise distances and physical properties of tens of thousands of galaxies. This quickly led to many new discoveries of how galaxies form and evolve. But these new instruments would also allow us to return to the still-unsolved nature of the cosmic acceleration, using a variety of new techniques which were first tested with these deep, wide-field surveys.

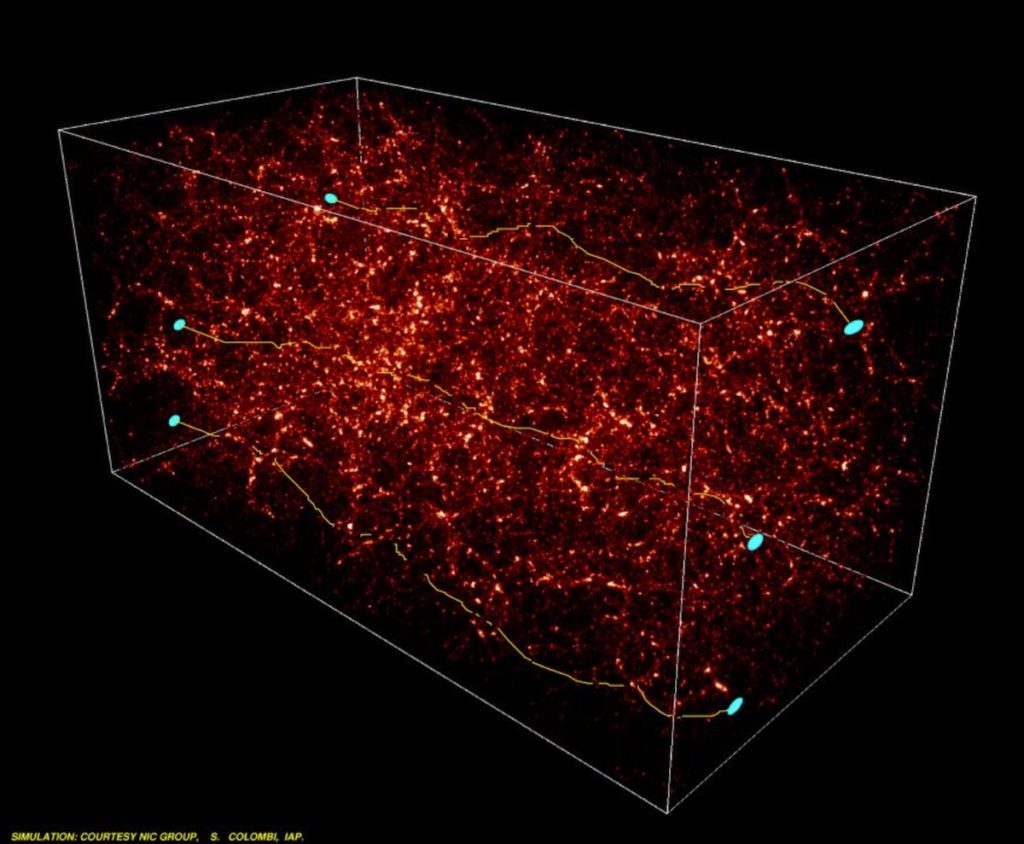

In the 1980s, observations of galaxy clusters with CCD cameras led to the discovery of the first gravitational arcs. These are images of distant galaxies which are, incredibly, magnified and distorted as their light passes near the cluster. The deflection of light by mass is one of the key predictions of Einstein’s theory of general relativity. The grossly distorted images can only be explained if a large part of the mass of the cluster is concealed in invisible or ‘dark’ matter. However, in current models of galaxy formation, the observed growth of structures in the Universe can only be explained if this dark matter is distributed throughout the Universe and not only in the centres of galaxy clusters. This also means that even the shapes of the galaxies that make up the ‘cosmic wallpaper’ across the night sky should be very slightly correlated, as light rays from these distant objects pass close to dark matter everywhere in the Universe. The effect would be tiny, but it should be detectable.

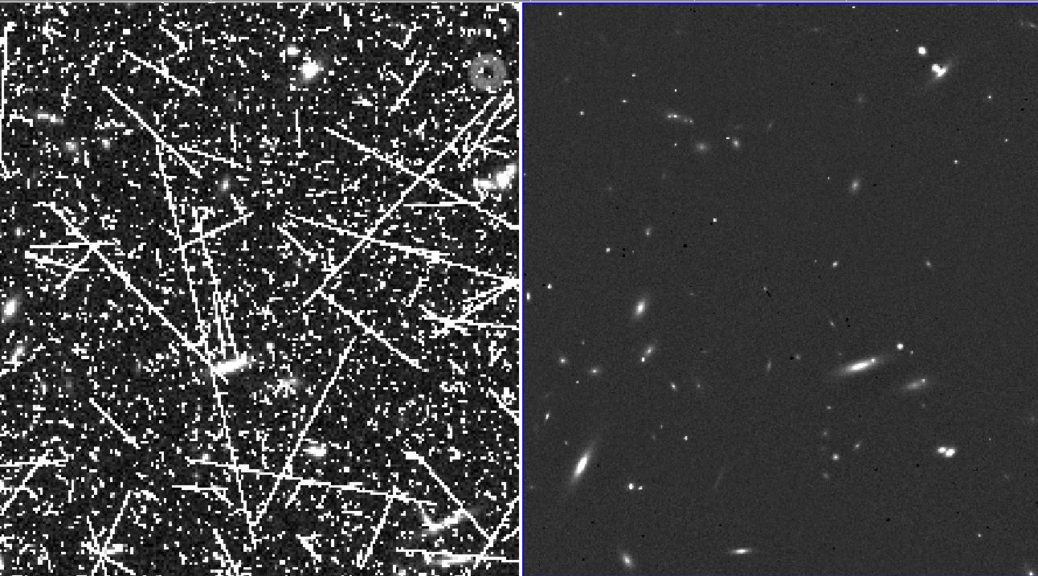

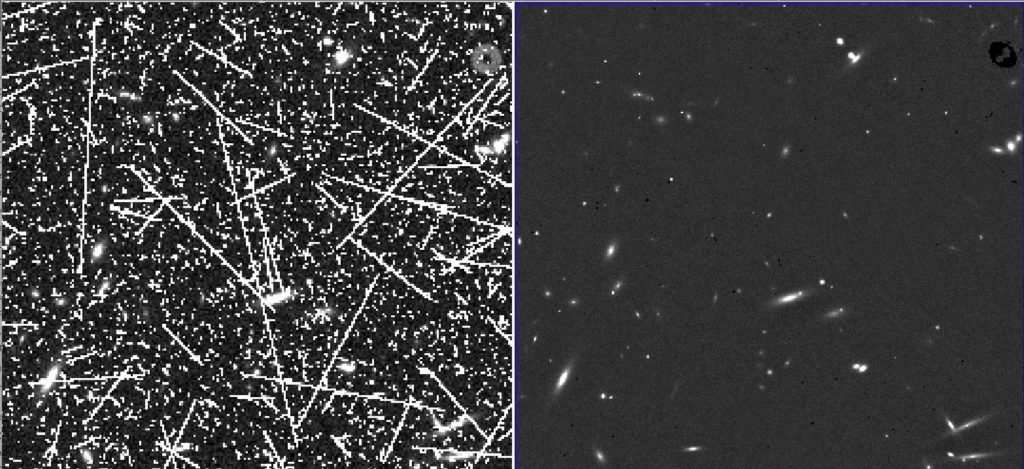

Around the world, several teams raced to measure this effect in new wide-field survey camera data. The challenges were significant: the tiny effect required rigorous control of every source of instrumental error and detailed knowledge of telescope optics. But by the early 2000s, a few groups had measured the “correlated shapes” of faint galaxies. They also showed that this measurement could be used to constrain how rapidly structures grow in the Universe. At the same time, other groups, using the first wide-field spectroscopic surveys, found that measurements of galaxy clustering could be used to independently constrain the parameters of the cosmological model.

Halfway through the first decade of the 21st century, it was becoming clear that clustering combined with gravitational lensing could be an excellent technique to probe the nature of the acceleration. Neither method was easy: one required very precise measurements of galaxy shapes, which were very hard to make with ground-based surveys that suffered from atmospheric blurring; the other required spectroscopic measurements of hundreds of thousands of galaxies. And both techniques seemed highly complementary to supernova measurements.

In 2006, a report from a group of scientists from Europe’s large observatories and space agencies charted a way forward to understand the origin of the acceleration. Clearly, what was needed was a space mission to provide wide-field, high-resolution imaging over the whole sky to measure the shapes, coupled with an extensive spectroscopic survey. These two ideas were submitted as separate satellite proposals: one would provide the spectroscopic survey (SPACE) and the other would provide the high-resolution imaging (DUNE). The committee, finding both concepts compelling, asked the two teams to work together to design a single mission, which would become Euclid. In 2012, the mission was formally approved.

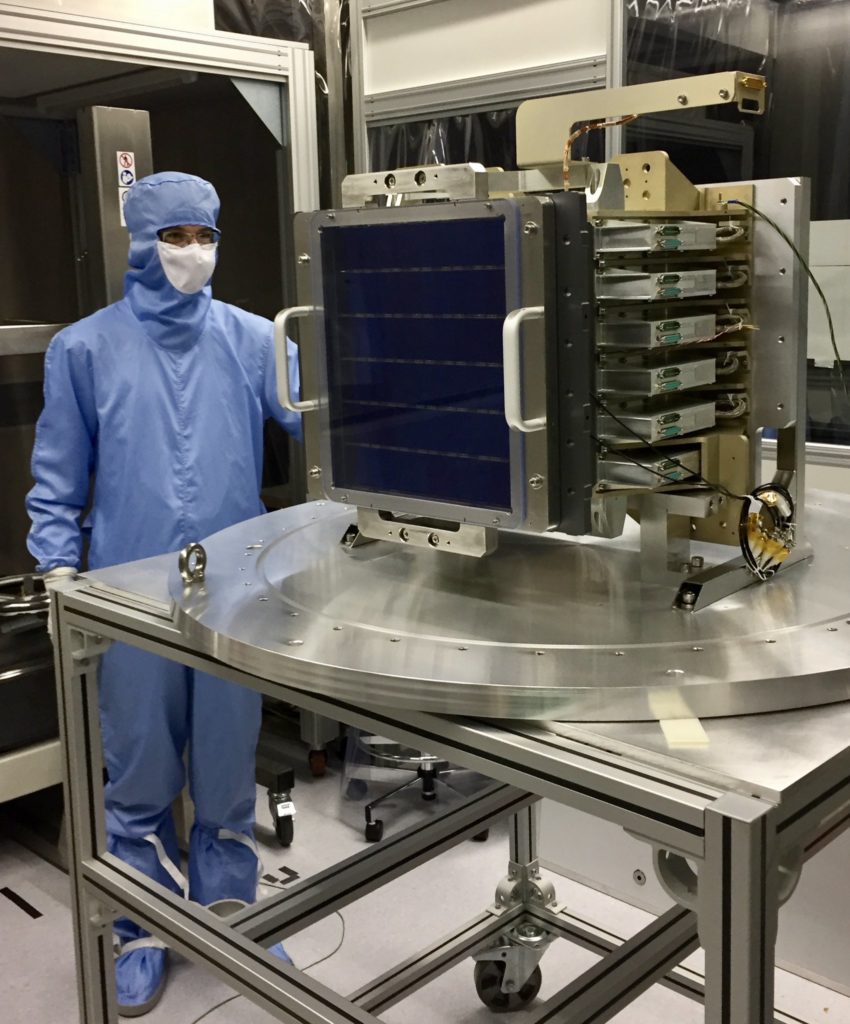

Euclid aims to make the most precise measurement ever of the geometry of the Universe and to derive the most stringent possible constraints on the parameters of the cosmological model. Euclid uses two methods: galaxy clustering with the spectrograph and imager NISP (sensitive to dark energy) and gravitational lensing with the imager VIS (sensitive to dark matter). Euclid’s origins in ground-based surveys make it unique. Euclid aims to survey the whole extragalactic sky over six years. But unlike in ground-based surveys, no changes can be made to the instrument after launch. After launch, Euclid will travel to the remote L2 orbit, one of the best places in the solar system for astronomy, to begin a detailed instrument checkout and prepare for the survey.

I have been involved in the team which will process VIS images for more than a decade. The next few weeks will be exciting and stressful in equal measure. VIS is the “Leica Monochrom” of satellite cameras: there is only one broad filter. The images will be in black-and-white. It will (mostly) not make images deeper than Hubble or James Webb: Euclid’s telescope mirror is relatively modest (there are some Euclid deep fields, but that is another story). But to measure shapes to the precision required to detect dark matter, every aspect of the processing must be rigorously controlled.

VIS images will cover tens of thousands of square degrees. Over the next few years, our view of the Universe will dramatically snap into high resolution. That, I am certain, will reveal wonders. Those images will be one of the great legacies of Euclid, together with a much deeper understanding of the cosmological model underpinning the Universe that will come from them and the data from NISP.

This Thursday, I’ll be travelling to Florida to see Euclid start its journey to its L2 orbit for myself. I’ll be waiting anxiously, with many of my colleagues, for our first glimpse of the wide-field, high-resolution Universe that will arrive a few weeks later.