On seeing the Euclid launch

In July, I had the great luck to visit the Kennedy Space Center to see the launch of the Euclid satellite. I wrote this a few days after the launch, but with the great amount of work that we have all been doing since then, I have not had time to publish it! I was thinking I had better get caught up…

My trip to Florida started inauspiciously enough, with a text message telling me that my flight to Newark, the first leg of my journey, had been delayed. This meant that I would miss my connection to Orlando. At CDG the United staff told me that, although they couldn’t rebook my flight from Paris, I shouldn’t worry: in Newark they would look after me. I imagined disembarking from the plane and walking right up to a smiling United representative at the service desk who would immediately put me on the next available flight. The reality was a four-hour wait in the line-up at Newark until finally a very helpful lady booked me on a flight to Orlando the next morning. I will pass in silence over the night spent in a hotel in the grey post-industrial suburbs of New Jersey. I arrived in the humid Florida heat on Friday with enough time to pick up my tickets and those of my colleagues.

I admit that I felt a certain ‘frisson’ seeing the signs for ‘Kennedy Space Center’ (KSC) as I drove away from Orlando airport. As a child in Ireland in the 1970s I wrote to KSC and asked them to send me stuff about space and planets. Soon enough, a big wad of old press releases as well as some nice colour pictures of planets arrived from America in a big yellow envelope. It was wonderful. I couldn’t understand why more people were not interested in astronomy given the Universe was so large and the Earth was so small. And so now, 40 years later, I was on a highway heading right to KSC. Soon enough, I was flying over a large river inlet and in the distance I could see what I knew was the famous Vehicle Assembly Building, one of the largest buildings in the world. But right now, I was not going to KSC, I was only going to the hotel to get the tickets…

The next morning, I left the hotel at 07:20. The launch was scheduled for 11:12 AM, but I knew that we had to get there early. My bag was full of cameras and factor 50 sunscreen. I had a big hat I had bought at the surf shop near my hotel, ready for the intense heat and light of a summer morning in Florida. I picked up a colleague at his nearby hotel.

Although we had left early, there were already many people at Kennedy for the launch. It was blisteringly hot, and the sun shone brightly in a blue sky without clouds. Although I have been working on the Euclid project for more than a decade and have been to every consortium meeting (except one), there were many people I had never seen before.

Soon enough, we left on the first bus carrying everyone to the bleachers at Banana Creek, a prime viewing location across the water from the launch towers. The bleachers, however, had not an inch of shade, and it was more than an hour until the launch; there was no question of staying outside. But next to the bleachers was the Saturn V Apollo building, and we spent a good hour there looking at this impressive space hardware from the past. Soon it was time to go outside again.

At the bleachers, everyone was finding their places. There was blinding heat and light. To the right, there was a big screen relaying the SpaceX/ESA livestream, and a local commentator provided us with some additional context. I stared out at the horizon, at the place where Euclid would soon leave the Earth. Confusingly, there were several different launch towers.

And suddenly, we were only a few minutes from launch. I had promised to do a livestream with the IAP auditorium, where everyone was gathered to watch the launch. I put in my AirPods and my colleague Amadine tried to film me with my iPhone. There was such blinding light everywhere that it was impossible to see the screen, but I could hear the questions from Paris and I tried to formulate some intelligent answers.

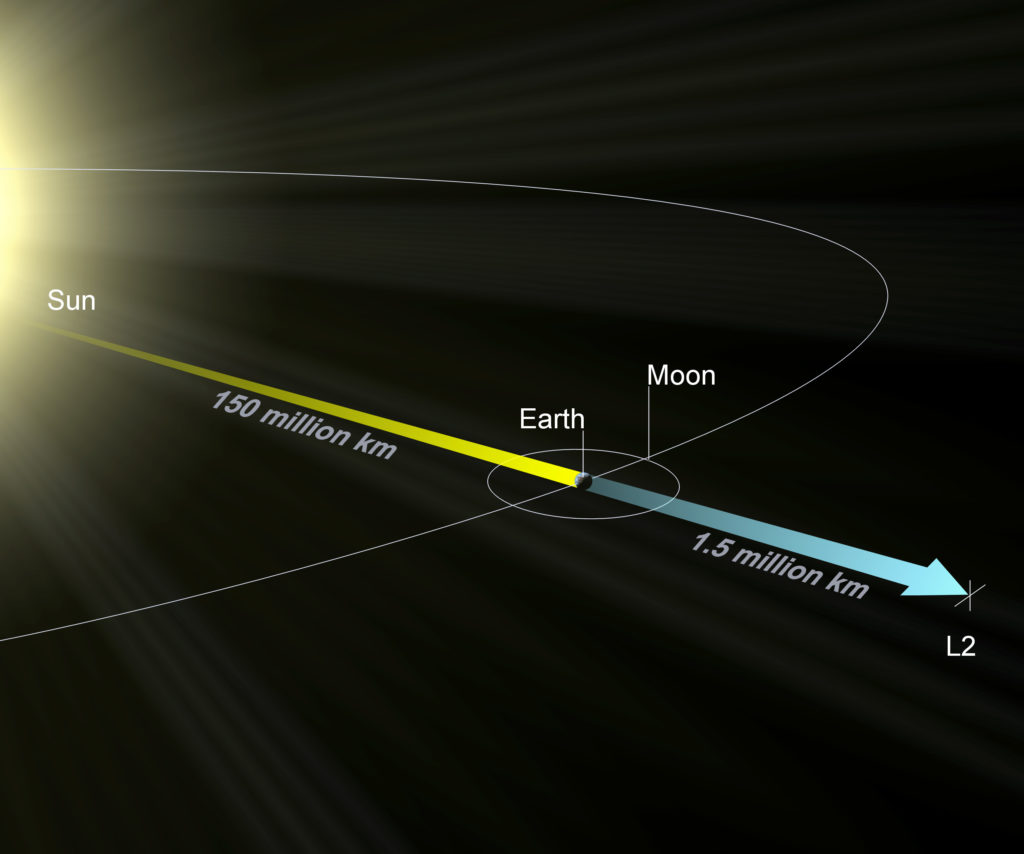

We were in the final minutes before the countdown. No sign of a hold or a delay. There were no clouds in the sky. We were told there was a delay between the livestream and the real world, a few seconds, not much but enough to make everyone chanting the countdown pointless. We didn’t know the real-world number of minutes left. Suddenly, there was an enormous cloud on the horizon, low down, and rising from the cloud we could see the Falcon 9 rocket atop a bright orange-yellow flame. It was one of the brightest things I have ever seen. But at first it was completely silent as the rocket arced up into the sky. Then the sound came, a great rolling, rumbling wave of sound energy. My cheap straw hat vibrated in time to the roar of the nine Merlin rocket engines. Clearly, it takes a lot of energy to get a two-ton satellite to L2, I thought to myself.

I was transfixed. In the bag at my feet I had my two Leica cameras, my telephone and a Ricoh compact camera too, but I didn’t want to do anything but look at this bright orange candle as it disappeared into the cloudless sky. On the callout from the screen we heard ‘Max Q’, indicating that the rocket had passed through the zone of maximum aerodynamic pressure. And then it was gone, and there was finally just a cloud in the sky, a cloud of water vapour made by the Falcon 9 rocket.

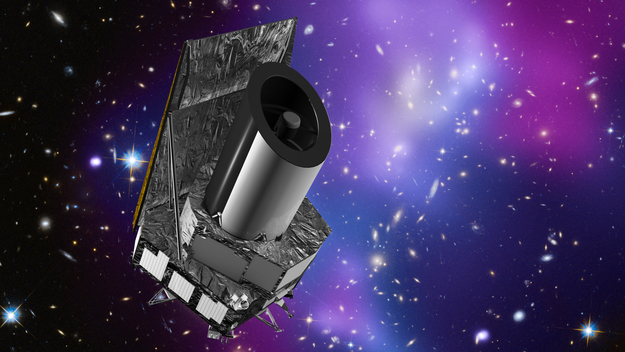

The livestream from ESA and SpaceX continued. We saw the booster coming back, landing on the drone ship. In orbit there was a short coast phase, and then the second stage ignited again. By this time, all the public had left and there were only a few of us in the bleachers or sheltering nearby. Then, on the “jumbotron” (the big screen they have there) we saw Euclid deploy, the silvery yellow foil catching the sunlight as it separated from the SpaceX second stage. But still, the story was not over. Was the satellite alive and communicating with the Earth? Then finally on the screen we saw the first signal from the spacecraft. Euclid was alive and heading to L2. And the real work would start very soon.